120 court cases have been caught with AI hallucinations, according to new database

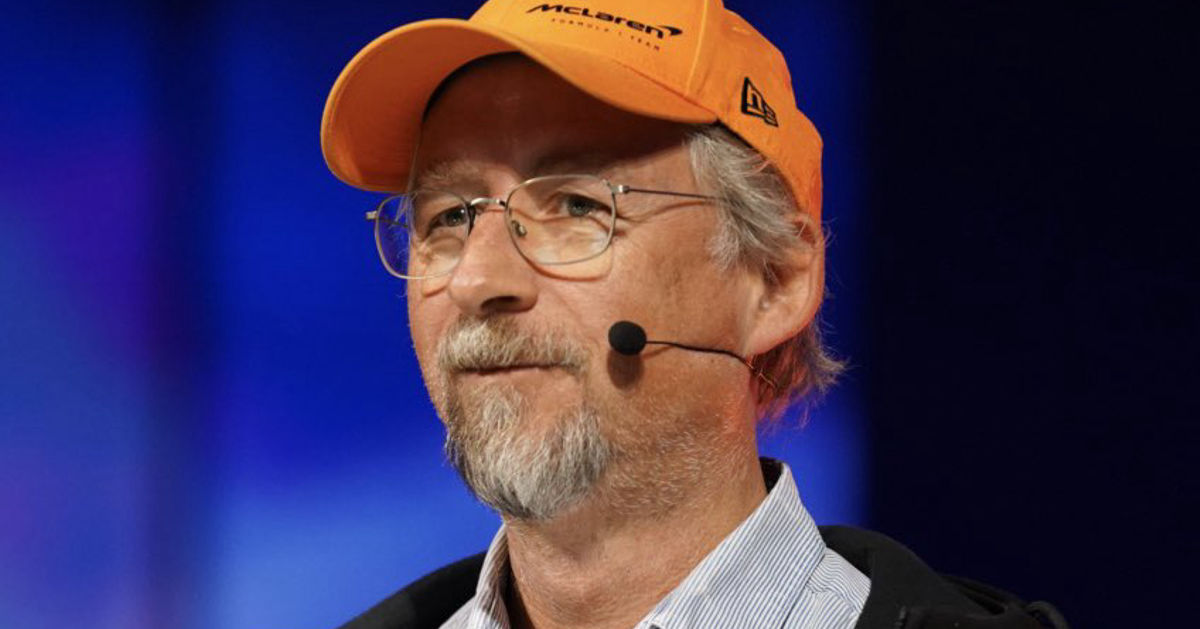

A new database from lawyer and data scientist Damien Charlotin tracks the increasing instances of AI hallucinations in court cases.

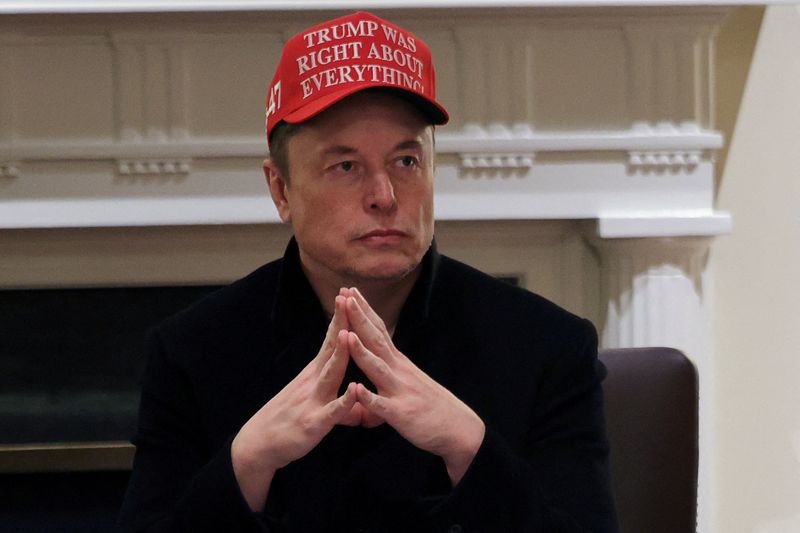

Lawyers representing Anthropic recently got busted for using a false attribution generated by Claude in expert testimony.

But that's one of more than 20 court cases containing AI hallucinations in the past month alone, according to a new database created by French lawyer and data scientist Damien Charlotin. And those were just the ones that were caught in the act. In 2024, which was the first full year of tracking cases, Charlotin found 36 instances. That jumped up to 48 in 2025, and the year is only halfway over. The database, which was created in early May, has 120 entries so far, going back to June 2023.

A database of AI hallucinations in court cases shows the increasing prevalence of lawyers using AI to automate the grunt work of building a case. The second-oldest entry in the database is the Mata v. Avianca case, which made headlines in May 2023 when law firm Levidow, Levidow & Oberman got caught citing fake cases generated by ChatGPT.

The database tracks instances where an AI chatbot hallucinated text, "typically fake citations, but also other types of arguments," according to the site. That means fake references to previous cases, usually as a way of establishing legal precedent. It doesn't account for the use of generative AI in other aspects of legal documents. "The universe of cases with hallucinated content is therefore necessarily wider (and I think much wider)," said Charlotin in an email to Mashable, emphasis original.

"In general, I think it's simply that the legal field is a perfect breeding ground for AI-generated hallucinations: this is a field based on load of text and arguments, where generative AI stands to take a strong position; citations follow patterns, and LLMs love that," said Charlotin.

The widespread availability of generative AI has made it drastically easier to produce text, automating research and writing that could take hours or even days. But in a way, Charlotin said, erroneous or misinterpreted citations for the basis of a legal argument are nothing new. "Copying and pasting citations from past cases, up until the time a citation bears little relation to the original case, has long been a staple of the profession," he said.

The difference, Charlotin noted, is that those copied and pasted citations at least referred to real court decisions. The hallucinations introduced by generative AI refer to court cases that never existed.

Judges and opposing lawyers have always been expected to check citations as part of their respective responsibilities in a case. That now includes looking for AI hallucinations. The increase in hallucinations discovered in cases could be due to the increasing availability of LLMs, but also "increased awareness of the issue on the part of everyone involved," said Charlotin.

Ultimately, leaning on ChatGPT, Claude, or other chatbots to cite past legal precedents is proving consequential. The penalties for those caught filing documents with AI hallucinations include financial sanctions, formal warnings, and even dismissal of cases.

Still, Charlotin said the penalties have been "mild" so far and the courts have put "the onus on the parties to behave," since the responsibility of checking citations remains the same. "I feel like there is a bit of embarrassment from anyone involved."

Disclosure: Ziff Davis, Mashable’s parent company, in April filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.