With the launch of o3-pro, let’s talk about what AI “reasoning” actually does

New studies reveal pattern-matching reality behind the AI industry's reasoning claims.

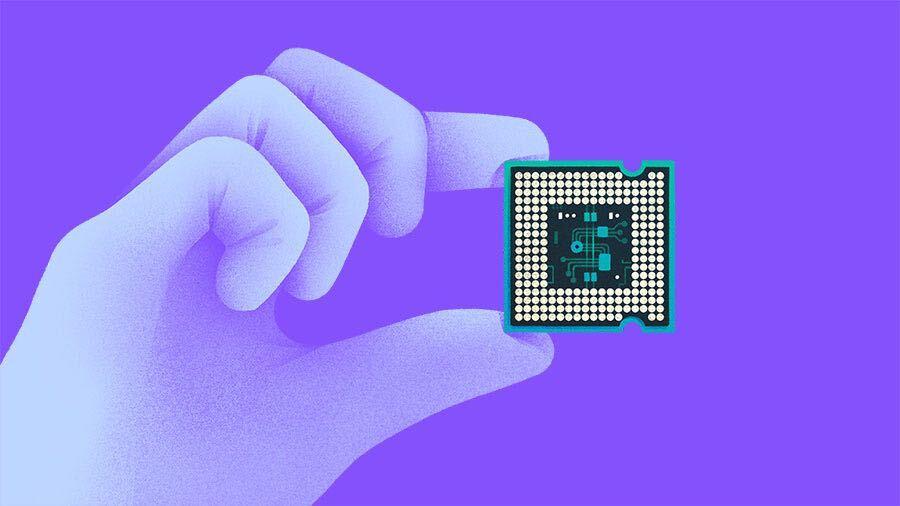

On Tuesday, OpenAI announced that o3-pro, a new version of its most capable simulated reasoning model, is now available to ChatGPT Pro and Team users, replacing o1-pro in the model picker. The company also priced o3-pro in the API 87 percent below o1-pro and cut o3 prices by 80 percent. While "reasoning" is useful for some analytical tasks, new studies have posed fundamental questions about what the word actually means when applied to these AI systems.

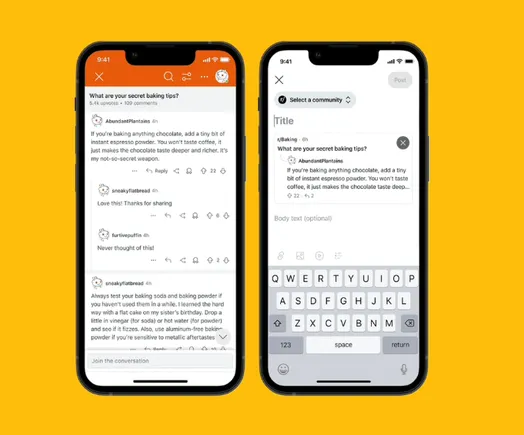

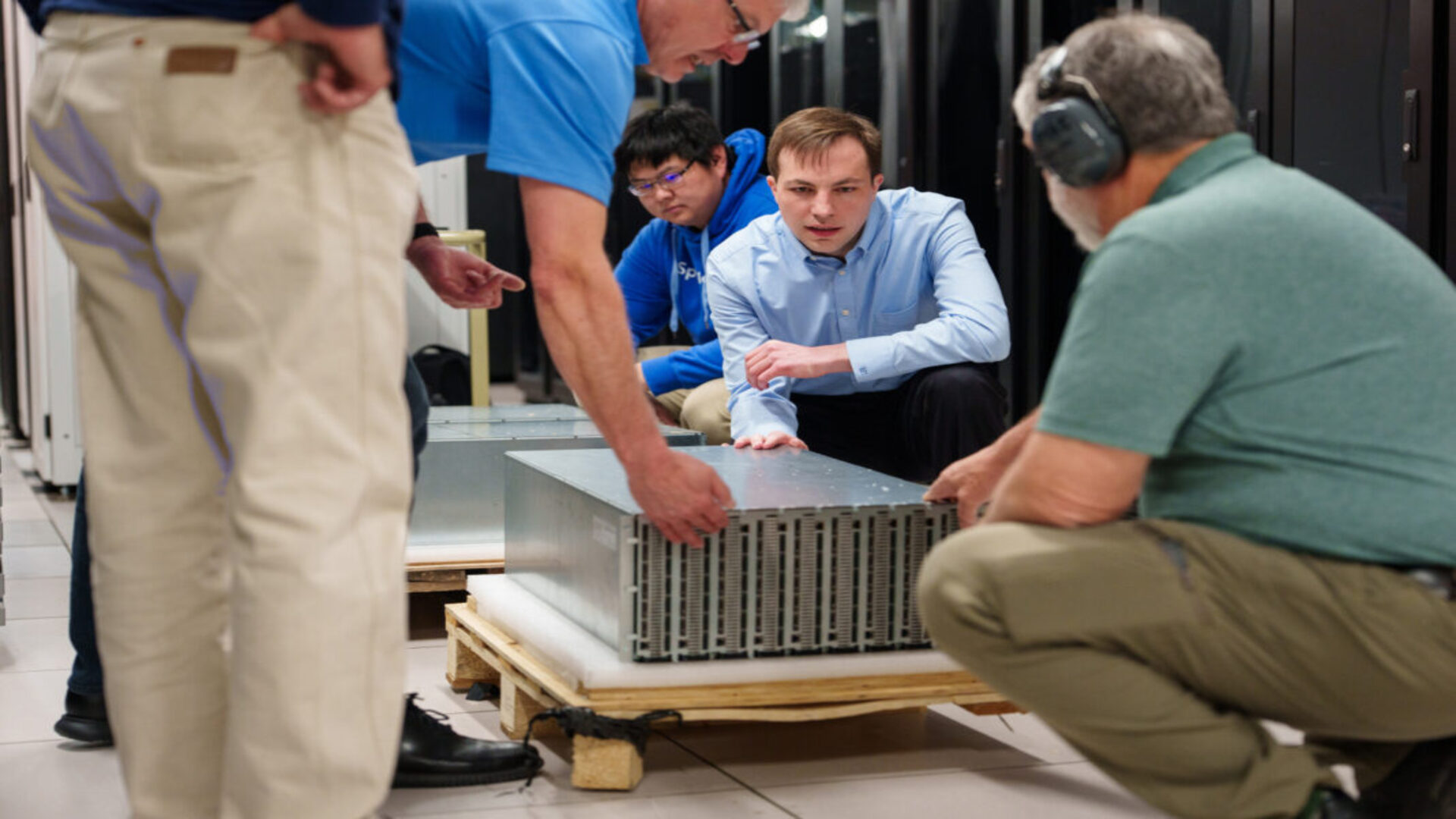

We'll take a deeper look at "reasoning" in a minute, but first, let's examine what's new. OpenAI originally launched o3 (non-pro) in April; the o3-pro model focuses on mathematics, science, and coding while adding capabilities like web search, file analysis, image analysis, and Python execution. Because these tool integrations slow responses (o3-pro takes longer than the already slow o1-pro), OpenAI recommends using the model for complex problems where accuracy matters more than speed. However, simulated reasoning models like o3-pro do not necessarily confabulate less than "non-reasoning" AI models (they still introduce factual errors), which is a significant caveat when seeking accurate results.

Beyond the reported performance improvements, OpenAI announced a substantial price reduction for developers. In the API, o3-pro costs $20 per million input tokens and $80 per million output tokens, making it 87 percent cheaper than o1-pro. The company also reduced the price of the standard o3 model by 80 percent.
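To make per-million-token pricing concrete, here is a minimal sketch that estimates the cost of a single API call at o3-pro's listed rates and checks the "87 percent cheaper" figure. The o3-pro prices come from the announcement above; the o1-pro prices ($150 input, $600 output per million tokens) are an assumption based on o1-pro's earlier listed API pricing, and the token counts are a hypothetical workload, not measured usage.

```python
# Prices in US dollars per million tokens.
# o3-pro rates are from the announcement; o1-pro rates are an ASSUMPTION
# (its previously listed API price), used only to check the percentage.
O3_PRO = {"input": 20.00, "output": 80.00}
O1_PRO_ASSUMED = {"input": 150.00, "output": 600.00}


def request_cost(prices: dict[str, float], input_tokens: int, output_tokens: int) -> float:
    """Return the dollar cost of one API call at per-million-token prices."""
    return (input_tokens * prices["input"] + output_tokens * prices["output"]) / 1_000_000


def percent_cheaper(new: float, old: float) -> float:
    """Return the percentage reduction when moving from the old price to the new one."""
    return (1 - new / old) * 100


# Hypothetical workload: a 5,000-token prompt producing 2,000 output tokens.
cost = request_cost(O3_PRO, 5_000, 2_000)
print(f"o3-pro cost per request: ${cost:.2f}")  # prints "$0.26"
print(f"input price cut:  {percent_cheaper(O3_PRO['input'], O1_PRO_ASSUMED['input']):.1f}%")
print(f"output price cut: {percent_cheaper(O3_PRO['output'], O1_PRO_ASSUMED['output']):.1f}%")
```

Under that assumption, both the input and output rates drop by about 86.7 percent, which rounds to the 87 percent OpenAI cited.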
